Introduction

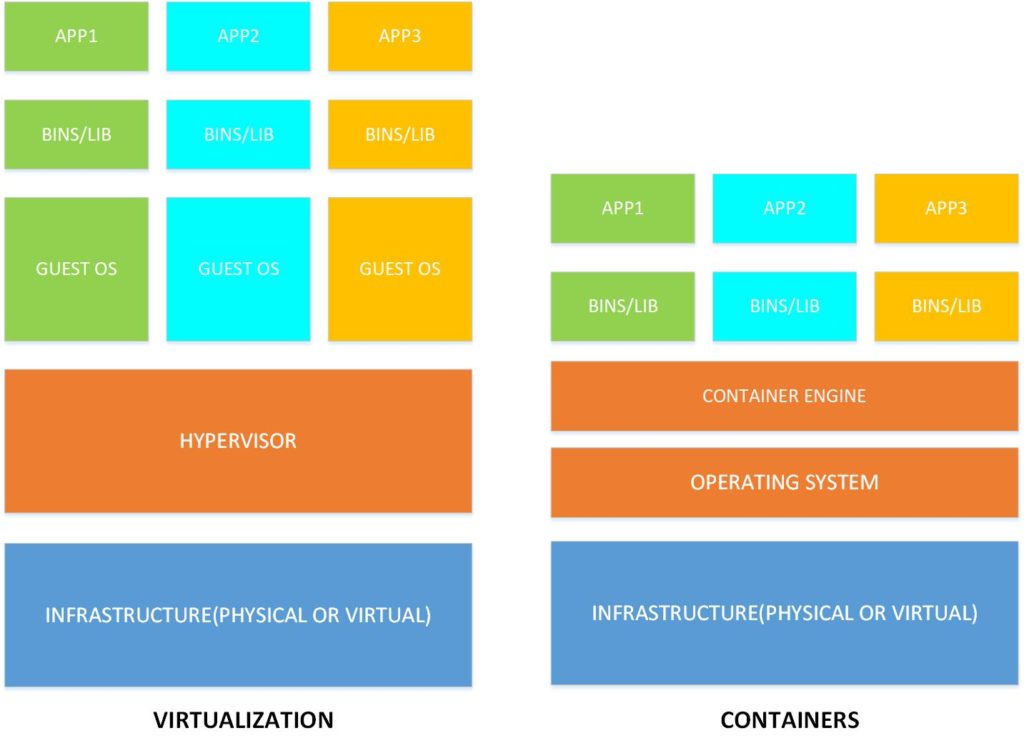

Traditional virtualization relies on virtual machines, each running its own full operating system (the same as the host's or a different one), which increases resource requirements. Containerization is a newer type of virtualization technology that allows multiple isolated, distributed applications to run on a single operating system, whether that system runs in a virtual machine, on a bare-metal server, or in the cloud. The only requirement is that the containers share the same operating system kernel. In other words, classic virtualization virtualizes the hardware stack, while containers virtualize the operating system layer. Containerization is growing fast. One piece of evidence: the American multinational technology company IBM bought Red Hat, the world's leading provider of enterprise open source solutions, for a staggering $34 billion. Red Hat is known for its PaaS (Platform as a Service) solution, OpenShift.

How it works

Containerization virtualizes the operating system layer, so applications can run independently without each needing a separate virtual machine. All applications run in isolation on the same kernel, each with its own share of CPU, memory, disk, process space, users, network, and storage. All this is made possible by two Linux kernel features: namespaces and control groups (cgroups).

Namespaces provide an isolated environment in which processes see only the resources of their own namespace. Process IDs can even be identical in different namespaces, because each PID namespace numbers its processes independently. Where namespaces limit what a process can see, cgroups limit how much it can use: they cap and account for the CPU, memory, and I/O consumed by a group of processes. These two features are specific to the Linux kernel, so you are probably wondering how Docker runs on Windows or macOS computers. It uses a little trick: on non-Linux operating systems, Docker runs a lightweight Linux virtual machine and starts the containers inside it.
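Namespace and cgroup membership can be inspected from user space on any Linux host. The Python sketch below (Linux only) reads the `/proc/<pid>/ns` symlinks, whose inode numbers identify the namespaces a process belongs to, and the `/proc/<pid>/cgroup` file, which lists its control groups. The helper function names are our own; only the `/proc` file layout comes from the kernel.

```python
import os
import re

# A /proc/<pid>/ns/* symlink points to a target such as "pid:[4026531836]":
# the namespace type followed by an inode number that identifies the namespace.
_NS_TARGET = re.compile(r"^(\w+):\[(\d+)\]$")


def parse_ns_target(target: str) -> tuple:
    """Split a namespace link target into (namespace type, inode number)."""
    match = _NS_TARGET.match(target)
    if match is None:
        raise ValueError(f"unexpected namespace link target: {target!r}")
    return match.group(1), int(match.group(2))


def parse_cgroup_line(line: str) -> tuple:
    """Split one /proc/<pid>/cgroup line into (hierarchy id, controllers, path)."""
    hierarchy, controllers, path = line.rstrip("\n").split(":", 2)
    return hierarchy, controllers, path


def namespaces_of(pid: str = "self") -> dict:
    """Map each namespace entry of a process to its inode number (Linux only)."""
    ns_dir = f"/proc/{pid}/ns"
    result = {}
    for name in sorted(os.listdir(ns_dir)):
        try:
            _, inode = parse_ns_target(os.readlink(os.path.join(ns_dir, name)))
        except (OSError, ValueError):
            continue  # skip entries we cannot read or do not recognize
        result[name] = inode
    return result


def cgroups_of(pid: str = "self") -> list:
    """List the cgroups a process belongs to (Linux only)."""
    with open(f"/proc/{pid}/cgroup") as f:
        return [parse_cgroup_line(line) for line in f if line.strip()]


if __name__ == "__main__":
    # Processes in the same namespace report the same inode for that entry;
    # a containerized process typically reports different ones than the host.
    for name, inode in namespaces_of().items():
        print(f"{name:<20} {inode}")
    # An empty controller field marks the cgroup v2 (unified) hierarchy.
    for hierarchy, controllers, path in cgroups_of():
        print(f"cgroup {hierarchy} [{controllers or 'v2'}] {path}")
```

Comparing the output of this script on the host and inside a container shows which namespaces the container runtime has created for it.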

Containerization packages the application together with its configuration files, libraries, and dependencies. All of this is bundled into a single artifact called an image. An image contains everything the application needs to run on any platform with a supported operating system.

Benefits

The main advantages of containerization are fast startup and minimal memory usage, since a container does not boot a full operating system of its own. You have probably been in a situation where a program runs on one platform but not on another because of missing dependencies or libraries, or a different operating system configuration. Containers avoid these problems through isolation and portability, and they also bring agility and scalability.

Security

Containerization provides strong isolation at the container level: a security breach in one container does not directly affect the others. The picture changes with an attack on the operating system kernel, which all containers on the same host share, so a successful attack on the kernel affects every container running on it. Virtual machines are similarly vulnerable if the hypervisor is compromised. However, a hypervisor offers much less functionality than a kernel, so its attack surface, and the damage an attacker can do, is much smaller. One of the basic forms of protection is Linux namespaces, which the administrator can use to isolate each container.

Containerization and microservices

The traditional way of delivering an application is a monolithic architecture, which has been in use since the beginning of the information revolution. But times are changing: as user requirements grow and software consumption increases worldwide, the flaws of this approach are coming to light.

Containers work best with service-oriented architectures. A microservice architecture is a distributed architecture in which service components are accessed remotely through mechanisms such as Java Message Service (JMS), Representational State Transfer (REST), Simple Object Access Protocol (SOAP), and many others.
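Of these, REST over HTTP is the easiest to try with nothing but the Python standard library. The sketch below runs a tiny, hypothetical price service and calls it remotely; the `/prices/<item>` route and the `PRICES` data are illustrative assumptions, and a production service would normally use a dedicated web framework.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical data owned by this service; a real service would use its own store.
PRICES = {"apple": 0.5, "pear": 0.75}


class PriceHandler(BaseHTTPRequestHandler):
    """Answers GET /prices/<item> with a JSON document."""

    def do_GET(self):
        parts = self.path.strip("/").split("/")
        if len(parts) == 2 and parts[0] == "prices" and parts[1] in PRICES:
            status, body = 200, {"item": parts[1], "price": PRICES[parts[1]]}
        else:
            status, body = 404, {"error": "not found"}
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, format, *args):
        pass  # silence the default per-request logging for the demo


def start_service(port: int = 0) -> ThreadingHTTPServer:
    """Start the service in a background thread; port 0 picks a free port."""
    server = ThreadingHTTPServer(("127.0.0.1", port), PriceHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def get_price(host: str, port: int, item: str) -> tuple:
    """Client side: a REST call that returns (HTTP status, decoded JSON body)."""
    conn = HTTPConnection(host, port, timeout=5)
    try:
        conn.request("GET", f"/prices/{item}")
        response = conn.getresponse()
        return response.status, json.loads(response.read())
    finally:
        conn.close()


if __name__ == "__main__":
    server = start_service()
    port = server.server_address[1]
    print(get_price("127.0.0.1", port, "apple"))  # (200, {'item': 'apple', 'price': 0.5})
    server.shutdown()
    server.server_close()
```

The client knows only the URL and the JSON shape, not how the service stores its data, which is what lets the two sides be deployed in separate containers.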

Microservices

Decomposing a monolithic application into microservices

Breaking down a large monolithic application is not an easy job. A typical starting point is to analyze the application's three-layer architecture:

- Presentation layer (components that respond to HTTP requests)

- Business logic layer (defines the business rules that determine how data can be created, saved, and modified)

- Data access layer (components that access infrastructure entities such as a database)

Creating a microservice for each layer brings two important benefits: the three microservices can be developed, deployed, and scaled independently of one another, and each exposes a remote API that any other microservice can invoke.
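To make the seams between the layers concrete, here is a hypothetical order-handling module in Python, split along the three layers above. Each layer depends only on the narrow interface of the layer below it, which is what later allows each one to be moved behind a remote API and deployed as a separate microservice. The class names, the `/orders` route, and the quantity rule are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class OrderRepository:
    """Data access layer: the only code that touches storage (a dict here)."""
    rows: Dict[str, int] = field(default_factory=dict)

    def save(self, order_id: str, quantity: int) -> None:
        self.rows[order_id] = quantity

    def load(self, order_id: str) -> Optional[int]:
        return self.rows.get(order_id)


@dataclass
class OrderService:
    """Business logic layer: enforces the rules and delegates storage."""
    repository: OrderRepository
    max_quantity: int = 100  # hypothetical business rule

    def place_order(self, order_id: str, quantity: int) -> None:
        if not 0 < quantity <= self.max_quantity:
            raise ValueError("quantity out of range")
        if self.repository.load(order_id) is not None:
            raise ValueError("duplicate order")
        self.repository.save(order_id, quantity)


@dataclass
class OrderApi:
    """Presentation layer: turns HTTP-style requests into business calls."""
    service: OrderService

    def handle(self, method: str, path: str, body: dict) -> Tuple[int, dict]:
        if method == "POST" and path == "/orders":
            try:
                self.service.place_order(body["id"], body["quantity"])
            except (KeyError, ValueError) as error:
                return 400, {"error": str(error)}
            return 201, {"id": body["id"]}
        return 404, {"error": "not found"}


if __name__ == "__main__":
    api = OrderApi(OrderService(OrderRepository()))
    print(api.handle("POST", "/orders", {"id": "A-1", "quantity": 3}))  # (201, {'id': 'A-1'})
    print(api.handle("POST", "/orders", {"id": "A-1", "quantity": 3}))  # (400, {'error': 'duplicate order'})
```

Because `OrderApi` only calls `place_order` and `OrderService` only calls `save` and `load`, each of those calls could be replaced by a remote request without changing the other layers' logic.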

One of the long-term strategies for decomposing a monolithic application is to convert its existing modules into microservices one at a time. Each conversion shrinks the monolith until it becomes manageable or is simply one more microservice among the others. Converting the most frequently used modules first is recommended, as it accelerates the development of those components. An interesting approach is to convert a module into a microservice backed by an in-memory database and deploy it on a host with sufficient memory.
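This incremental conversion is often implemented with a routing layer in front of the application, an approach commonly called the strangler fig pattern. A minimal Python sketch, with hypothetical module names and plain callables standing in for the monolith and for remote service calls:

```python
from typing import Callable, Dict, Iterable, List

# A handler takes (module name, request) and returns a response.
Handler = Callable[[str, str], str]


class ModuleRouter:
    """Routes each request to an extracted microservice if one exists, else to the monolith."""

    def __init__(self, monolith: Handler):
        self._monolith = monolith
        self._extracted: Dict[str, Handler] = {}

    def extract(self, module: str, service: Handler) -> None:
        """Record that `module` now runs as its own microservice."""
        self._extracted[module] = service

    def handle(self, module: str, request: str) -> str:
        handler = self._extracted.get(module, self._monolith)
        return handler(module, request)

    def still_in_monolith(self, modules: Iterable[str]) -> List[str]:
        """Modules not converted yet; the monolith shrinks as this list does."""
        return [m for m in modules if m not in self._extracted]


if __name__ == "__main__":
    router = ModuleRouter(lambda module, request: f"monolith/{module}: {request}")
    router.extract("search", lambda module, request: f"search-service: {request}")
    print(router.handle("search", "q=shoes"))     # search-service: q=shoes
    print(router.handle("billing", "invoice=7"))  # monolith/billing: invoice=7
    print(router.still_in_monolith(["search", "billing", "catalog"]))  # ['billing', 'catalog']
```

Callers keep talking to the router, so each extraction is invisible to them, and the list returned by `still_in_monolith` tracks how far the migration has progressed.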

Recommended application decomposition rules:

- The architecture must be stable and the service must implement a small set of related functions

- Each change should affect only one service

- Each service has its own API that encapsulates its implementation, so the implementation can change without affecting clients

- The service must be testable

- Each team that owns the service should be autonomous
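The API and testability rules reinforce each other. In the hypothetical Python sketch below, client code depends only on an `InventoryApi` protocol, so the real implementation can change freely and a test double can stand in for the remote service. All names are illustrative, not part of any library.

```python
from typing import Dict, List, Protocol


class InventoryApi(Protocol):
    """Hypothetical public contract of an inventory service."""

    def available(self, sku: str) -> int: ...


class DictInventory:
    """One possible implementation; clients never see the dictionary inside."""

    def __init__(self, stock: Dict[str, int]):
        self._stock = dict(stock)

    def available(self, sku: str) -> int:
        return self._stock.get(sku, 0)


class FakeInventory:
    """Test double that satisfies the same contract and records calls."""

    def __init__(self, fixed: int):
        self.fixed = fixed
        self.calls: List[str] = []

    def available(self, sku: str) -> int:
        self.calls.append(sku)
        return self.fixed


def can_ship(inventory: InventoryApi, sku: str, quantity: int) -> bool:
    """Client logic written against the API only, not against an implementation."""
    return inventory.available(sku) >= quantity


if __name__ == "__main__":
    print(can_ship(DictInventory({"sku-1": 5}), "sku-1", 3))  # True
    fake = FakeInventory(fixed=0)
    print(can_ship(fake, "sku-2", 1), fake.calls)  # False ['sku-2']
```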

Microservice communication

The biggest challenge in the transition to microservices is the change in communication mechanism. Inside a monolithic application, components communicate through function calls or, at most, inter-process communication. Microservices communicate over the network and basically use two types of communication:

- Synchronous protocols (such as HTTP/HTTPS, where the client sends a request and waits for a response from the other side)

- Asynchronous protocols (such as AMQP, where the client does not wait for a response from the other side)
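The difference between the two styles can be simulated in a single Python process. In this sketch, a plain function call stands in for a blocking HTTP request, and a `queue.Queue` with a consumer thread stands in for an AMQP broker and subscriber; it illustrates only the waiting behavior, not either protocol. The 0.1 s latency is an arbitrary assumption.

```python
import queue
import threading
import time
from typing import List


def slow_service(message: str) -> str:
    """Stand-in for a remote service: 0.1 s of simulated latency."""
    time.sleep(0.1)
    return message.upper()


def call_sync(message: str) -> str:
    """Synchronous style (like HTTP): the caller waits for the reply."""
    return slow_service(message)


def send_async(inbox: queue.Queue, message: str) -> None:
    """Asynchronous style (like publishing to AMQP): return once the message is queued."""
    inbox.put(message)


def start_consumer(inbox: queue.Queue, results: List[str]) -> threading.Thread:
    """Consumer that processes queued messages in the background, in order."""
    def run() -> None:
        while True:
            message = inbox.get()
            if message is None:  # sentinel value: stop consuming
                break
            results.append(slow_service(message))
    worker = threading.Thread(target=run, daemon=True)
    worker.start()
    return worker


if __name__ == "__main__":
    start = time.perf_counter()
    print(call_sync("order-1"), f"after {time.perf_counter() - start:.2f}s")

    inbox: queue.Queue = queue.Queue()
    results: List[str] = []
    worker = start_consumer(inbox, results)
    start = time.perf_counter()
    send_async(inbox, "order-2")
    send_async(inbox, "order-3")
    print(f"sender free after {time.perf_counter() - start:.4f}s")
    inbox.put(None)  # ask the consumer to stop after draining the queue
    worker.join()
    print(results)  # ['ORDER-2', 'ORDER-3']
```

The synchronous caller is blocked for the full service latency, while the asynchronous sender returns almost immediately and the work completes later; the price is that the sender needs another channel if it ever wants the result.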

Benefits of microservices

Let's look at some of the main benefits of microservices:

- Smaller hardware footprint and higher hardware utilization, thanks to small container sizes

- Isolation (if one component becomes unavailable, the rest of the application keeps running, and developers have the option of falling back to another service)

- Much easier scaling: individual containers can be scaled independently

- Easier upgrades: individual containers can be upgraded without affecting the others

- Easier to understand, because each service contains a small amount of code
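The isolation benefit depends on callers being prepared for a dependency to be down. A minimal Python sketch of a fallback wrapper, assuming a hypothetical recommendation service whose client raises `ServiceUnavailable` when it cannot be reached:

```python
from typing import Callable, List

Fetch = Callable[[str], List[str]]


class ServiceUnavailable(Exception):
    """Hypothetical error a client raises when its remote service cannot be reached."""


def with_fallback(primary: Fetch, fallback: Fetch) -> Fetch:
    """Return a fetch function that degrades to `fallback` instead of failing."""
    def fetch(user_id: str) -> List[str]:
        try:
            return primary(user_id)
        except ServiceUnavailable:
            return fallback(user_id)
    return fetch


def personalized(user_id: str) -> List[str]:
    # Stand-in for a call to the recommendation microservice, currently down.
    raise ServiceUnavailable("recommendation service is down")


def bestsellers(user_id: str) -> List[str]:
    # Static, non-personalized answer served locally.
    return ["bestseller-1", "bestseller-2"]


if __name__ == "__main__":
    recommend = with_fallback(personalized, bestsellers)
    print(recommend("user-42"))  # ['bestseller-1', 'bestseller-2']
```

The page that shows recommendations still renders, just with a less personalized answer, instead of failing along with the unavailable service.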

Drawbacks of microservices

Microservices also come with real drawbacks:

- The complexity of designing a distributed system (it is up to the developers to implement inter-service communication and to handle partial outages)

- Creating automated tests for scenarios that span multiple microservices

- Lots of small parts (automation is required to manage them)

- Update scenarios that involve multiple microservices can be challenging

- Implementing requirements that involve multiple microservices can be complex
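Handling partial outages usually starts with retrying transient errors. A minimal Python sketch of retry with exponential backoff and jitter; `TransientError` is a hypothetical exception type, and the `sleep` parameter is injectable so the behavior can be tested without actually waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, HTTP 503)."""


def retry(call: Callable[[], T], attempts: int = 4, base_delay: float = 0.05,
          sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `call`, retrying transient failures with exponential backoff and jitter."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the outage to the caller
            # Delays double each time (base, 2*base, 4*base, ...) and are scaled by
            # random jitter so that many clients do not retry in lockstep.
            sleep(base_delay * (2 ** attempt) * random.uniform(0.5, 1.0))
    raise AssertionError("unreachable")
```

Retries only help with short, transient failures; callers still need a plan, such as the fallback shown for isolation, for when every attempt fails.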

Microservices example

When the US retail giant Walmart faced six million page views per minute on its website, engineers quickly realized that their monolithic application could no longer scale easily. The migration to microservices was performed in 2012 and led to astonishing results. The biggest benefits were reduced downtime and operating cost savings of up to 50% from migrating to a virtualized environment. Conversions increased by 20% and mobile orders by 98%.

Another important example of microservices is the Netflix story. Netflix began its transformation from a monolith to microservices back in 2009, when it moved its infrastructure to the AWS cloud. In the first phase, Netflix migrated movie encoding and other applications not exposed to customers. The second phase covered the customer-facing environment, such as account login, movie selection, and device configuration. The whole process was completed by 2011. Today, Netflix runs more than 500 microservices, with an API gateway that handles more than 2 billion requests per day.