Introduction

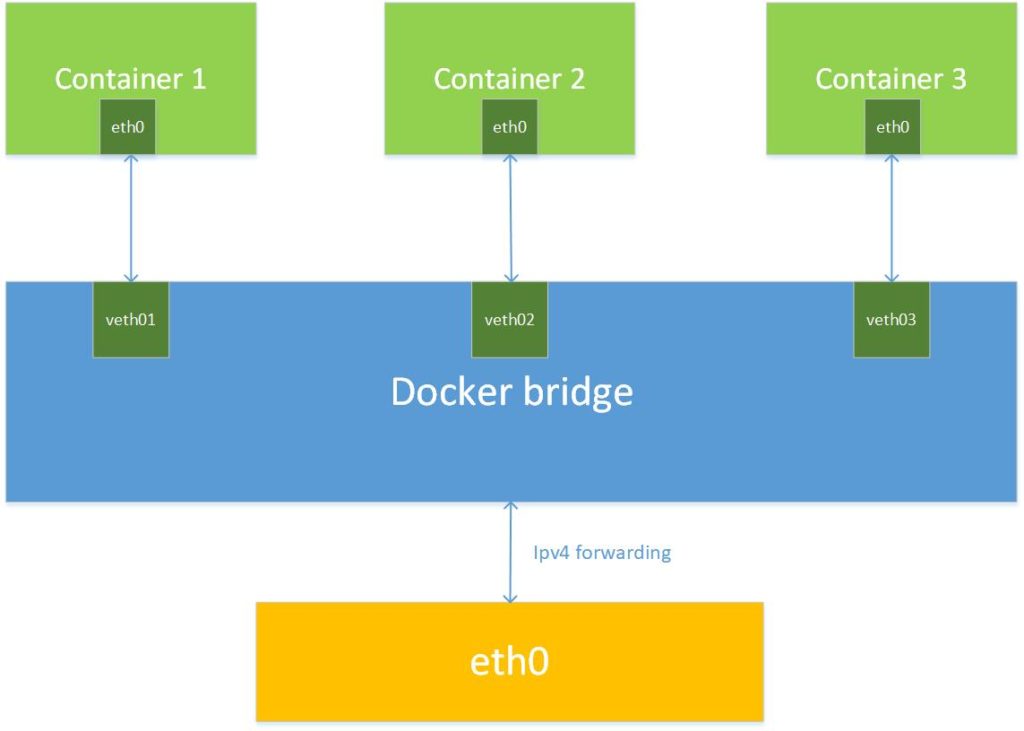

Docker's network architecture covers the network scenarios required for communication at the local, remote or cluster level. Docker provides this through native and third-party network drivers. Various drivers exist, but the default drivers that ship with Docker have either local or cluster (swarm) scope. Docker network drivers are a high-level abstraction of networking capabilities, so the physical layer of the network does not need to be specifically configured. At a low level, a Docker network is a Linux network, because it relies on the following capabilities of the Linux kernel:

- Linux bridge (an L2 virtual switch built into the Linux kernel that does the same job as a physical switch)

- Network namespace (an isolated network stack used by each container, with its own interfaces, routes and firewall rules)

- Virtual Ethernet adapters (veth pairs that act as a link between network namespaces, with one end on the container side and the other on the Linux bridge; Docker drivers use them to connect containers to the same Linux bridge)

- Iptables (a built-in mechanism in the Linux kernel that provides packet filtering and an L3/L4 firewall; Docker network drivers use iptables for network traffic segmentation, port mapping, traffic marking and load balancing)

The network drivers that come with the Docker installation are bridge, host, MACVLAN, none and overlay. All of them have local scope except the overlay driver, which works in cluster (swarm) mode.

Docker bridge network

When we run a container, the default network used by Docker is the bridge network. As mentioned in the introduction, the Docker bridge network driver relies on the Linux bridge.

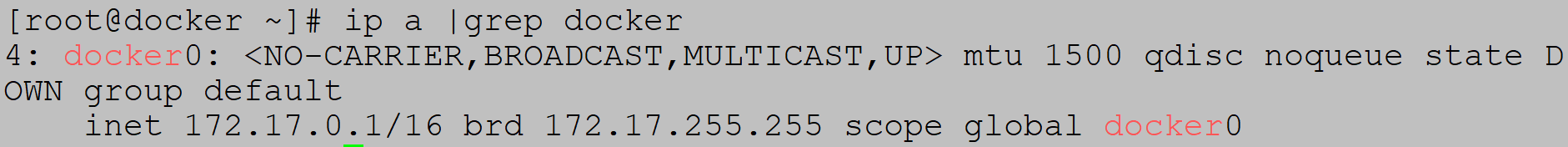

After Docker is installed, run the ip a command to check the new docker0 interface:

docker0 is a Linux bridge whose default address is 172.17.0.1/16, which places it in the 172.17.0.0/16 network range.

You can list the default Docker networks with the docker network ls command:

There are three default networks: bridge, host and none.
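The relationship between the docker0 address and the subnet it belongs to can be checked with a short calculation. The sketch below uses Python's standard ipaddress module. The container address 172.17.0.3 is the one that appears in the example later in this article, and the helper name in_bridge_subnet is only illustrative.

```python
import ipaddress

# Address assigned to docker0, written in interface notation (address/prefix).
docker0 = ipaddress.ip_interface("172.17.0.1/16")


def in_bridge_subnet(address: str) -> bool:
    """Return True if the address falls inside the docker0 subnet."""
    return ipaddress.ip_address(address) in docker0.network


print(docker0.network)                     # 172.17.0.0/16
print(in_bridge_subnet("172.17.0.3"))      # True
print(in_bridge_subnet("192.168.78.10"))   # False
```

The interface address (172.17.0.1) is what the bridge itself uses, while the network (172.17.0.0/16) is the range from which container addresses are drawn.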

Example 1 (start two containers in the same bridge network)

Check the host bridge address and subnet (172.17.0.1/16):

Start the first container:

While inside the container, press Ctrl+P followed by Ctrl+Q to exit the container without stopping it.

Start the second container:

Its IP address is 172.17.0.3:

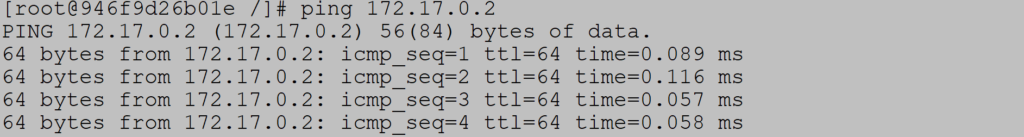

Ping the first container:

*If the ping command is not available in the Ubuntu image, use the CentOS image instead.

Check the default gateway inside the container:

Inspect the default bridge network with the docker network inspect command:

The defined subnet is 172.17.0.0/16:

The containers in this subnet have the following IP addresses:

With the ip link command we can see the two veth adapters on the host, one for each container attached to the Docker bridge subnet 172.17.0.0/16:

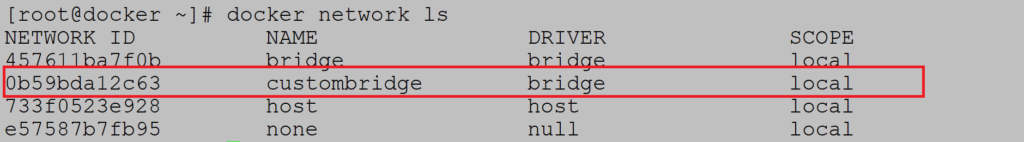

Custom Docker bridge network

If we do not want Docker to assign a subnet automatically, we can create our own bridge network and specify the desired subnet range.

Example (custom bridge configuration)

In this example, we will create four containers. Two containers will be part of the custom network and two will be part of the default bridge network. One of the containers will be part of both networks.

Example (Create custom bridge network):

Check the Docker network list:

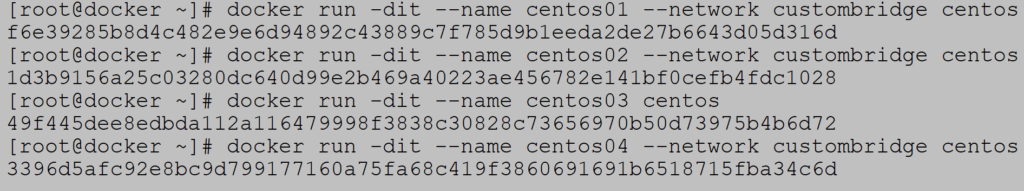

Create four containers:

We will add an additional network, the default bridge network, to centos04:

Check which containers belong to the default bridge network:

And which containers belong to the custom bridge network:

Notice that you can ping centos02 from centos01 by hostname:

The reason is the DNS server embedded in the Docker engine, which provides hostname resolution.

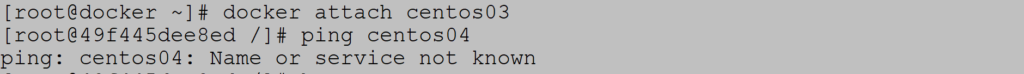

On the other hand, no DNS service exists on the default bridge network, for security reasons:

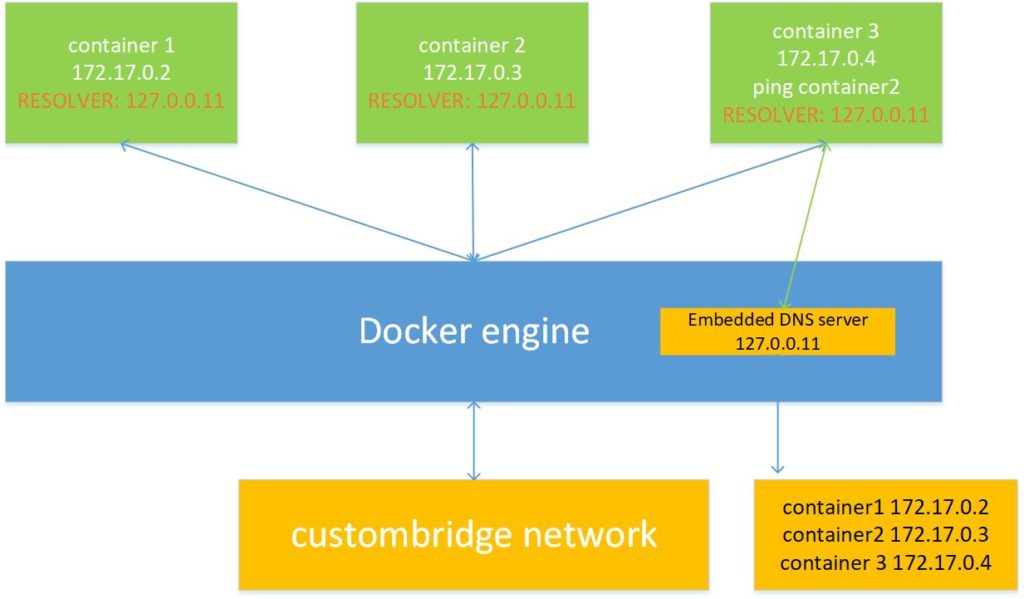

How DNS works in the Docker bridge network

Docker uses a built-in DNS server that is part of the Docker engine. DNS works differently in the default bridge network and in custom bridge networks. For security reasons, the default bridge network does not use the built-in DNS server, while custom networks do. It is important to note that if the containers are not in the same network, the built-in DNS server does not resolve the name itself but forwards the query to the default DNS server.

The only way to communicate by hostname within the default bridge network is to connect the containers directly with the --link parameter. However, this is a legacy method that is no longer recommended. If no DNS servers are defined in the resolv.conf file, the container falls back to the Google DNS servers 8.8.8.8 and 8.8.4.4.
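The fallback rule can be illustrated with a small sketch. It is a simplified model of the behaviour described above, not Docker's actual implementation: the function name effective_nameservers is hypothetical, and the sample address 192.168.78.2 is made up for the example.

```python
GOOGLE_DNS = ["8.8.8.8", "8.8.4.4"]


def effective_nameservers(resolv_conf_text: str) -> list[str]:
    """Return the nameservers from resolv.conf content, or the Google
    DNS servers when the file defines none (simplified model)."""
    servers = []
    for line in resolv_conf_text.splitlines():
        fields = line.split()
        # Only lines of the form "nameserver <address>" define a DNS server.
        if len(fields) >= 2 and fields[0] == "nameserver":
            servers.append(fields[1])
    return servers or list(GOOGLE_DNS)


print(effective_nameservers("nameserver 192.168.78.2\nsearch localdomain\n"))
print(effective_nameservers("search localdomain\n"))
```

The first call returns the server found in the file, while the second has no nameserver line and therefore falls back to 8.8.8.8 and 8.8.4.4.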

Default bridge network

Containers use DNS services directly from the host: the /etc/resolv.conf file is copied from the host to the container. The Docker daemon watches for changes in the host file and writes them to the container. It is important to note that changes can only be synchronized while the container is stopped.

When you run a container, several DNS-related things happen:

1) The /etc/resolv.conf file on the host is copied to the container

2) The /etc/hostname file is created and a hostname is assigned to the container (by default, its container ID)

3) The /etc/hosts file is created

Example (container file check)

Check the content of the resolv.conf file on the host:

Start an Ubuntu container and verify that the content of its resolv.conf file is the same as on the host:

Custom bridge network

Things change when you define a custom bridge network, which uses the built-in DNS server that is part of the Docker engine. The resolv.conf file is populated with the IP address of the embedded DNS server, which is always 127.0.0.11. This name resolution service is only available between containers in the same network. For everything else, the embedded DNS server forwards requests to the default DNS server.
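The decision the embedded DNS server makes can be sketched as a toy model: names of containers on the same network are answered locally, everything else is handed to an upstream resolver. The function resolve, the container addresses and the stub upstream resolver are all assumptions made for illustration and do not reflect Docker's internal code.

```python
def resolve(name, network_members, upstream_lookup):
    """Answer from the local network table when possible,
    otherwise forward the query to the upstream resolver."""
    if name in network_members:
        return network_members[name]
    return upstream_lookup(name)


# Hypothetical containers attached to the same custom bridge network.
members = {"centos01": "172.18.0.2", "centos02": "172.18.0.3"}


def upstream(name):
    # Stand-in for the default DNS server; it only labels the query.
    return f"forwarded:{name}"


print(resolve("centos02", members, upstream))     # 172.18.0.3
print(resolve("example.com", members, upstream))  # forwarded:example.com
```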

Example (DNS server in custom bridge network)

Start a CentOS container in the custom network and check the content of its resolv.conf file:

Custom DNS

We can always define a custom DNS server, either through the --dns parameter or by adding it to the /etc/docker/daemon.json configuration file. The example shows the configuration of a custom DNS server at container start:

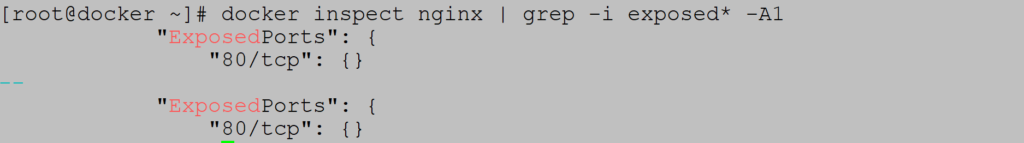

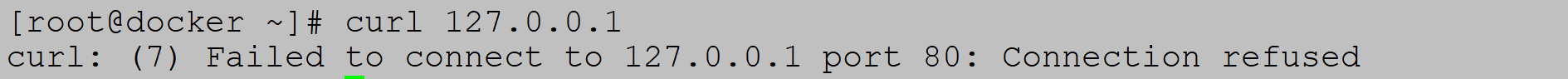
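For the daemon.json variant, the relevant setting is the dns key, which holds a list of server addresses. The sketch below only builds the JSON text. The helper name daemon_dns_config is hypothetical and the Google DNS addresses are used purely as sample values. Writing the result to /etc/docker/daemon.json and restarting the Docker daemon is left out.

```python
import json


def daemon_dns_config(servers):
    """Return daemon.json content that sets the default DNS servers."""
    return json.dumps({"dns": list(servers)}, indent=2)


print(daemon_dns_config(["8.8.8.8", "8.8.4.4"]))
```

If daemon.json already contains other settings, the dns key should be merged into the existing object rather than replacing the file.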

Docker expose port

In the Docker bridge network, because of the additional network layer, port forwarding must be configured for every service that should be reachable from outside, for example services running over HTTP and HTTPS. All ports are available on the host, but a container runs in its own network namespace, so some configuration is needed to make applications available outside the container itself. Let’s look at the basic rules:

- The Dockerfile used for the image build sometimes contains one or more EXPOSE portnumber lines

However, this is not enough. For a port to be forwarded, you must specify the parameter -p hostport:containerport when starting the container. Another option is the -P flag, which maps all ports exposed in the Dockerfile to random ports on the host.

- You can skip EXPOSE entirely and just use the -p flag, which handles both exposing and forwarding the port in one step.
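Because the order of the two ports in the -p value is easy to mix up, here is a small sketch that parses the two simplest forms: hostport:containerport and a bare containerport, in which case Docker picks a random host port. The names PortMapping and parse_publish are hypothetical, and the richer forms that the real flag accepts (a bind address or a protocol suffix) are deliberately not handled.

```python
from typing import NamedTuple, Optional


class PortMapping(NamedTuple):
    host_port: Optional[int]  # None means Docker picks a random host port
    container_port: int


def parse_publish(spec: str) -> PortMapping:
    """Parse 'hostport:containerport' or 'containerport' as used with -p."""
    parts = spec.split(":")
    if len(parts) == 1:
        return PortMapping(None, int(parts[0]))
    if len(parts) == 2:
        return PortMapping(int(parts[0]), int(parts[1]))
    raise ValueError(f"unsupported publish spec: {spec!r}")


print(parse_publish("8080:80"))  # host port 8080 forwards to container port 80
print(parse_publish("80"))       # container port 80, random host port
```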

Example 1 (nginx container with port forwarding)

The nginx Dockerfile defines some exposed ports:

If we run nginx without the -p switch, it is not reachable from outside:

Now, if we use the -p switch to forward port 8080 on the host to port 80 in the container, things work:

Access to containers within the same and external network

Containers within the same network have no restrictions or firewalls between them, and communication flows freely across all ports. Things change if the containers are in different networks, where inbound traffic is blocked but outbound traffic is allowed. The same is true when accessing a container from external sources. Inbound traffic must be explicitly allowed with the technique from the previous section, port forwarding (parameter -p).

Start two containers in the default bridge network:

Attach to centos01 and install the netcat tool:

Start listening on port 80:

Attach to the second container, install telnet and check whether port 80 is open:

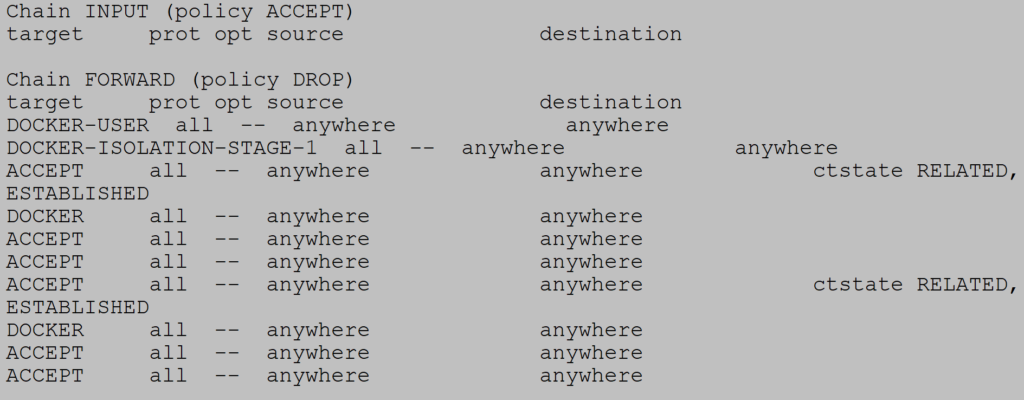

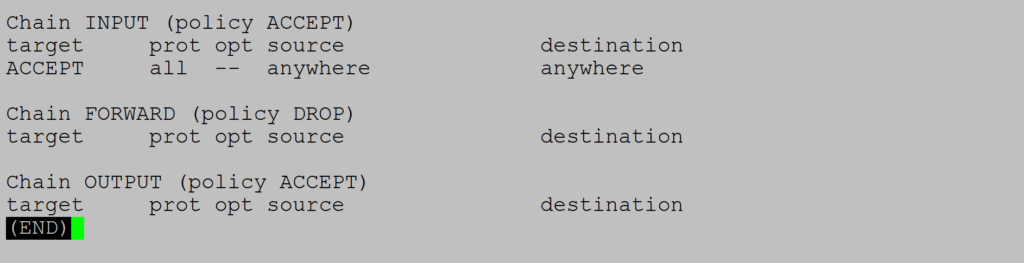

Docker and firewalld

You are probably wondering how firewalld works with Docker. We know that Docker uses iptables for container network segmentation. Firewalld is not aware of Docker's iptables rules and is not synchronized with them. During OS startup, firewalld takes all Docker iptables rules into account. Things go wrong when firewalld is restarted while the OS is running: firewalld removes all Docker iptables rules, which breaks Docker communication. A Docker service restart must therefore always follow a firewalld restart, so that the Docker chain in iptables is repopulated.

Check the Docker chain rules in iptables:

Restart firewalld:

The Docker rules in iptables are gone:

Restart the Docker service:

Things work as before:

*Take into account that all running containers will stop during the Docker restart.

Docker network host

The Docker host network driver does not segment the network the way the bridge network does. The container is an equal member of the native host network. Without the additional network layer, the network part of the container is no longer isolated, although all other container components still are. All containers in the host network can communicate over the host network. Each container appears on the host as a process that communicates with the other containers (processes). It is important to know that all containers share the IP address of the host.

The advantage of this configuration is better performance, because there is no extra network layer. The disadvantage is that container services compete for ports on the host: imagine several containers running and taking all the popular ports. This type of Docker network only works on Linux operating systems. A container that uses this network cannot be attached to any other network.
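The port contention problem is the same one that any two processes on a single host run into. As a rough illustration that does not involve Docker at all, the sketch below binds a TCP socket to a free loopback port and then shows that a second socket cannot bind to the same address and port. The function name second_bind_fails is hypothetical.

```python
import socket


def second_bind_fails() -> bool:
    """Bind one listener, then report whether a second bind
    to the same address and port is rejected."""
    first = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    second = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        first.bind(("127.0.0.1", 0))  # port 0 lets the OS choose a free port
        first.listen()
        port = first.getsockname()[1]
        try:
            second.bind(("127.0.0.1", port))
        except OSError:
            return True  # the port is already taken by the first socket
        return False
    finally:
        first.close()
        second.close()


print(second_bind_fails())
```

Two containers in the host network that both want port 80 are in the position of the two sockets here: only the first one gets the port.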

Example (start nginx container in host network)

Check if port 80 is free:

Start nginx container:

Check if nginx is running on port 80:

Double check with netstat command:

Docker network MACVLAN

The Docker MACVLAN network is also part of the host network, like the Docker host network, but the similarities stop there. In this type of network each container gets its own unique IP address from the host subnet range. MACVLAN connects containers in the same network; if you want different subnets, external routing is required. It supports the IEEE 802.1Q VLAN tagging protocol, so containers can belong to different VLANs. This technique is used for applications that have low latency requirements and need addresses from the host subnet. The disadvantage is limited container mobility, because containers are tied to the network configuration of the existing infrastructure.

Example (Start container in MACVLAN network)

Check the subnet you want to use for the containers:

Create a MACVLAN network that includes the 192.168.78.0/24 subnet:

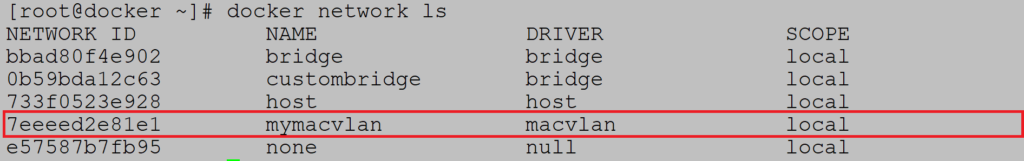

Check that the MACVLAN network is listed:

Create two containers within the subnet:

Check that an IP address is assigned:

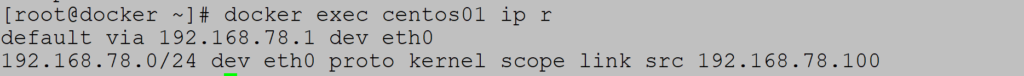

Check default gateway:

Isolated network

Containers can be run offline, which means that the container has no network adapter defined except loopback. A container in this network cannot be part of any other network. The parameter --network none is used for this:

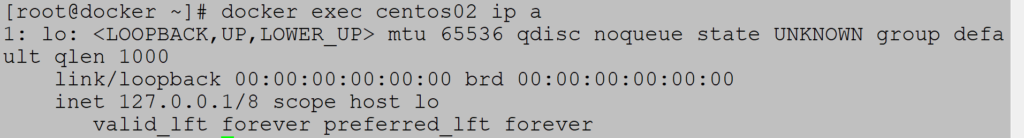

Check that there is no network adapter defined except loopback: