Introduction

Docker is a platform for developing, shipping, and running applications in isolated environments called containers. Docker runs on a local laptop, on physical or virtual machines, and in the cloud, which makes it a good fit for rapid CI/CD pipelines. Although originally designed for Linux, Docker also runs on Windows and macOS. This is possible because of its client-server architecture: the Docker client can be installed anywhere, while on Windows and macOS the server runs inside a Linux virtual machine.

Docker benefits

Developers like Docker because containers are portable and have a small footprint, and because they can share local code with colleagues as containers. Before going to production, containers can easily be run in a test environment against a set of tests and validation steps. Docker is also credited with rapid application deployment, because each container is a process rather than a full operating system. Each container runs in its own space with its own set of isolated resources, so neighbouring containers do not see each other.

Docker drawbacks

Containers are ephemeral by design: data written inside a container is lost once the container is removed, unless it is stored outside the container. To preserve that state, you must take extra steps, such as configuring persistent storage. Persistent storage is configured by mounting a volume, which is stored on the host, into the container.
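As a minimal sketch of what configuring persistent storage can look like, the snippet below assembles a docker run command that mounts a named volume into a container. The image name, volume name, container name and mount path are all hypothetical placeholders; only the docker run flags (-d, --name, -v) are real CLI options.

```python
def run_with_volume(image, volume, mount_path, name):
    """Build a `docker run` command that mounts a named volume into the container."""
    return [
        "docker", "run", "-d",
        "--name", name,
        # -v <volume>:<path> mounts the named volume at <path> inside the container,
        # so data written under <path> outlives the container itself
        "-v", f"{volume}:{mount_path}",
        image,
    ]


# All names below are made up for illustration.
cmd = run_with_volume("my-image", "app-data", "/data", "app")
print(" ".join(cmd))  # docker run -d --name app -v app-data:/data my-image
```

The list form can be passed directly to subprocess.run on a machine where Docker is installed.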

Docker services

To extend containers across multiple Docker hosts and form a cluster, we use Docker services. Services allow containers to be scaled across multiple Docker daemons, all of which run in swarm mode. The benefit of services is the ability to define a desired state, such as the number of replicas, and to load-balance across all worker nodes.
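To make the idea of a desired state more concrete, here is a toy model of how missing replicas could be spread across worker nodes. It is only an illustration of the concept under simple assumptions (least-loaded node first) and is not how the swarm scheduler is actually implemented; the function and node names are hypothetical.

```python
from collections import Counter


def place_replicas(desired, nodes, running):
    """Return the node chosen for each missing replica.

    desired: target number of replicas
    nodes:   list of worker node names
    running: list of node names, one entry per replica already running
    """
    load = Counter({node: 0 for node in nodes})
    load.update(running)
    placements = []
    for _ in range(max(0, desired - len(running))):
        # Pick the least-loaded node; ties go to the node listed first.
        target = min(nodes, key=lambda n: load[n])
        load[target] += 1
        placements.append(target)
    return placements


# One replica already runs on node-a; two more are needed to reach three.
print(place_replicas(3, ["node-a", "node-b", "node-c"], ["node-a"]))
# ['node-b', 'node-c']
```

When the desired state is already met, the function returns an empty list, which mirrors the idea that nothing needs to change.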

Docker architecture

Before looking at the components of the Docker architecture, let’s take a look at Docker Engine.

Docker engine

The software that hosts containers is called Docker Engine. It has three components:

- Server (the Docker daemon: a background process that responds to Docker API requests and creates Docker objects such as images, containers, networks and more)

- Client (the command-line interface, which sends commands to the server component)

- REST API (the interface that programs use to talk to the server component, over a UNIX socket or a network protocol)

Docker daemon

The Docker daemon is the brain of the entire operation and runs on the server component. When you run a command such as docker run, the Docker client converts it into an API request and sends it to the Docker daemon, which then runs the container. The Docker daemon can receive commands from multiple Docker clients, and it starts each container from a template, i.e. an image, which is pulled from Docker Hub by default.
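The translation from a command to an API request can be sketched roughly as follows. The endpoint paths and JSON field names follow the Docker Engine REST API, but the helper functions are hypothetical, the API version prefix is left out, and nothing is actually sent to a daemon; treat this as a simplified picture of what the client does.

```python
import json
from urllib.parse import urlencode


def pull_request(image, tag="latest"):
    # docker pull <image>:<tag>  ->  POST /images/create?fromImage=<image>&tag=<tag>
    return ("POST", "/images/create?" + urlencode({"fromImage": image, "tag": tag}), None)


def create_request(image, cmd, interactive=False, tty=False):
    # docker container create [-i] [-t] <image> <cmd...>  ->  POST /containers/create
    body = {
        "Image": image,
        "Cmd": cmd,
        "OpenStdin": interactive,
        "AttachStdin": interactive,
        "Tty": tty,
    }
    return ("POST", "/containers/create", json.dumps(body))


def start_request(container_id):
    # docker container start <id>  ->  POST /containers/<id>/start
    return ("POST", f"/containers/{container_id}/start", None)


method, path, body = pull_request("apache")
print(method, path)  # POST /images/create?fromImage=apache&tag=latest
```

Each function returns a (method, path, body) tuple that an HTTP client could send over the UNIX socket or a TCP connection.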

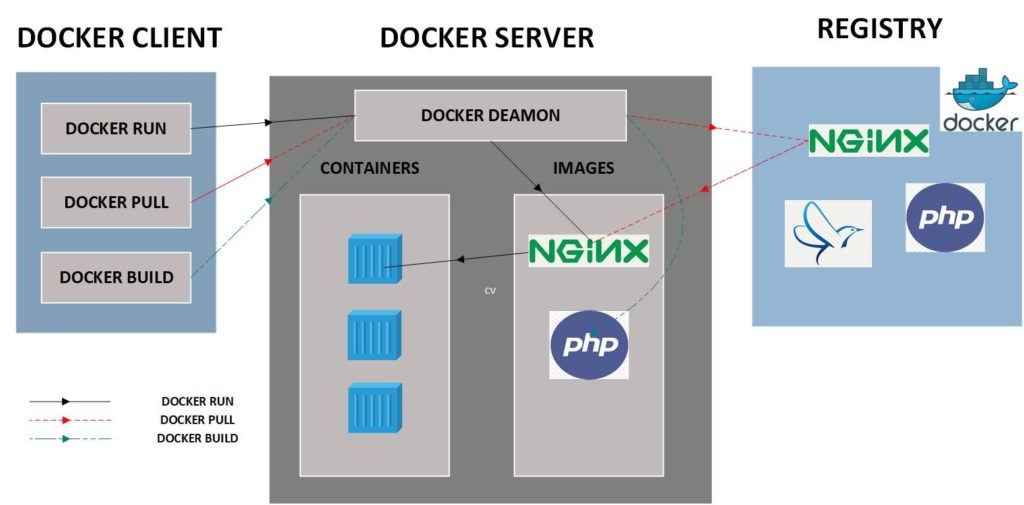

Docker architecture

The Docker architecture follows a client-server model with the following components: Docker client, Docker server and Docker registry.

Docker host

In terms of Docker Engine, the Docker host is the place where the server component resides: the daemon that listens for API requests such as docker run, docker pull and docker build, and creates the requested Docker objects.

Docker CLI

The Docker CLI provides a set of commands that are translated into API requests and sent to the Docker server for processing.

Let’s see what happens when we run the docker run -i -t apache /bin/bash command:

1) If you do not have the apache image on the local Docker server instance, Docker pulls it from the configured registry. If you have just installed Docker, the default registry is Docker Hub. The equivalent command is docker pull apache.

2) Docker creates a new container. The equivalent command is docker container create apache.

3) Docker allocates a read-write file system to the container as its top layer.

4) Docker configures the container with a network interface. By default, the container is attached to the default bridge network.

5) Docker starts the container and runs the interactive /bin/bash command line.
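The steps above can be sketched as a sequence of separate CLI commands. This is an approximation for illustration: the function and the container name "demo" are hypothetical, and the file system and network setup from steps 3 and 4 have no command of their own because Docker performs them while creating the container.

```python
def docker_run_steps(image, command, name, image_is_local):
    """List the commands that roughly add up to `docker run -i -t <image> <command>`."""
    steps = []
    if not image_is_local:
        # Step 1: pull the image only when it is missing locally.
        steps.append(["docker", "pull", image])
    # Steps 2 to 4: create the container; its writable layer and
    # network interface are set up as part of this step.
    steps.append(["docker", "container", "create", "-i", "-t", "--name", name, image] + command)
    # Step 5: start the container and attach the terminal to it.
    steps.append(["docker", "container", "start", "-a", "-i", name])
    return steps


for step in docker_run_steps("apache", ["/bin/bash"], "demo", image_is_local=False):
    print(" ".join(step))
```

If the image is already present, the pull step is simply skipped and the list starts with the create command.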

Docker client

The Docker client can interact with multiple Docker daemons. The advantage is that the client can be installed anywhere: Linux, Windows or macOS. For Linux containers, the Docker server always runs on a Linux machine (physical or virtual) that receives requests from the client. In this way the client can be completely separated from the server, because they communicate over remote protocols.
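One way to point the same client at different daemons is the DOCKER_HOST environment variable. The sketch below only prepares the command and environment for each daemon; the helper function and the host addresses are made-up examples, and nothing is executed.

```python
import os


def command_for_daemon(docker_host, args, base_env=None):
    """Return (argv, env) for running a docker command against a specific daemon."""
    env = dict(os.environ if base_env is None else base_env)
    # The Docker CLI reads DOCKER_HOST to decide which daemon to talk to.
    env["DOCKER_HOST"] = docker_host
    return ["docker"] + list(args), env


# Hypothetical daemons: the local socket, a host reached over SSH, and a TCP endpoint.
daemons = [
    "unix:///var/run/docker.sock",
    "ssh://user@build-server.example",
    "tcp://192.0.2.10:2376",
]
for host in daemons:
    argv, env = command_for_daemon(host, ["ps"], base_env={})
    print(env["DOCKER_HOST"], "->", " ".join(argv))
```

On a machine with Docker installed, each pair could be passed to subprocess.run(argv, env=env) to list the containers on that daemon.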

Docker objects

Docker containers

A container is the basic unit of Docker. In programming terms, an image is a class and a container is an instance of that class. Using the Docker client, containers can be started, stopped, moved, or deleted. We can connect them to a network or to storage, or even create a new image from their current state. The analogy with physical shipping containers comes from their main feature: mobility, or the ability to move to any platform. Of course, the real power is the possibility of running multiple containers from the same image.
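The class and instance analogy can be taken quite literally in code. The following is a toy model, not a Docker API: the Image and Container classes and the file names are invented for illustration. It shows two containers created from one image, each with its own private changes.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Image:
    """Read-only template; frozen so it cannot be modified after creation."""
    name: str
    files: tuple  # (path, content) pairs


@dataclass
class Container:
    """A running instance of an image with its own private changes."""
    image: Image
    changes: dict = field(default_factory=dict)

    def read(self, path):
        # A container sees its own changes first, then the image content.
        if path in self.changes:
            return self.changes[path]
        return dict(self.image.files)[path]

    def write(self, path, content):
        self.changes[path] = content


image = Image("web", (("/index.html", "hello"),))
first, second = Container(image), Container(image)
first.write("/index.html", "changed")
print(first.read("/index.html"), second.read("/index.html"))  # changed hello
```

The write in the first container is invisible to the second one, and the image itself stays untouched.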

Docker image

Images are immutable files that can be thought of as snapshots of a container. They are templates with instructions for creating a Docker container. Images are usually stored in public or private registries. Because they can take up a lot of space, they are made up of layers, which can be shared between images.
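To see why layering helps with space, consider a small back-of-the-envelope model. The layer names and sizes below are made up, and the assumption is simply that a layer used by several images is stored only once on a host.

```python
def disk_usage(images, layer_sizes):
    """Total size of all distinct layers referenced by the given images."""
    unique_layers = set()
    for layers in images.values():
        unique_layers.update(layers)
    return sum(layer_sizes[layer] for layer in unique_layers)


# Sizes in MB; every value here is invented for the example.
layer_sizes = {"base-os": 80, "python-runtime": 50, "app-a": 5, "app-b": 7}
images = {
    "app-a:1.0": ["base-os", "python-runtime", "app-a"],
    "app-b:1.0": ["base-os", "python-runtime", "app-b"],
}

shared = disk_usage(images, layer_sizes)
unshared = sum(layer_sizes[layer] for layers in images.values() for layer in layers)
print(shared, unshared)  # 142 272
```

In this made-up case the two images need 142 MB together instead of 272 MB, because the base and runtime layers are counted once.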

Docker registry

The Docker registry is where images are stored. By default, Docker is configured to look for and pull images from Docker Hub. You can use any other registry, or even create your own private one. The docker pull command downloads an image from the configured registry, and docker push uploads your image to it.
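Which registry an image comes from is encoded in the image name itself. The sketch below is a simplified parser, assuming the usual defaults (Docker Hub as docker.io, the library/ prefix for official images, the latest tag); it ignores digests (name@sha256:...) and the function name is hypothetical.

```python
def parse_image_reference(ref):
    """Split an image reference into (registry, repository, tag)."""
    # A tag is introduced by a ':' that appears after the last '/'.
    last_slash = ref.rfind("/")
    colon = ref.rfind(":")
    if colon > last_slash:
        name, tag = ref[:colon], ref[colon + 1:]
    else:
        name, tag = ref, "latest"

    first, _, rest = name.partition("/")
    # The first path component is a registry host if it looks like one.
    if rest and ("." in first or ":" in first or first == "localhost"):
        registry, repository = first, rest
    else:
        registry, repository = "docker.io", name
        if "/" not in repository:
            # Official images on Docker Hub live under library/.
            repository = "library/" + repository
    return registry, repository, tag


print(parse_image_reference("apache"))
# ('docker.io', 'library/apache', 'latest')
print(parse_image_reference("localhost:5000/team/app:1.2"))
# ('localhost:5000', 'team/app', '1.2')
```

A name without a registry part therefore always resolves to Docker Hub, while a private registry has to be spelled out in front of the repository.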

Finally

Docker builds on well-known Linux technologies such as namespaces and control groups. It is also important to note how layers are created when working with containers, which is made possible by a feature called UnionFS. UnionFS lets you take different file systems and create a union of their contents, with upper layers taking precedence over lower ones. We already know that an image is immutable, so when changes are made in a container they go into a separate writable layer on top of the image layers. In this way a container can be changed at runtime, but when it is removed, all changes in that upper layer are discarded. If you want to save the container state, create a new image or configure persistent storage.
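The layering behaviour can be imitated with Python's ChainMap, which looks keys up in several dictionaries in order and writes only to the first one. This is an analogy for the precedence rules described above, not a model of how a union file system is implemented; the file names and contents are invented.

```python
from collections import ChainMap

# Two read-only image layers; the app layer sits above the base layer.
base_layer = {"/bin/bash": "bash binary", "/etc/motd": "welcome"}
app_layer = {"/app/server": "server v1", "/etc/motd": "welcome to app"}

# A container adds one writable layer on top of the image layers.
container_layer = {}
view = ChainMap(container_layer, app_layer, base_layer)

print(view["/etc/motd"])  # welcome to app (the upper image layer wins)

# Writes land in the first mapping only, so the image layers stay unchanged.
view["/etc/motd"] = "edited at runtime"
print(view["/etc/motd"])       # edited at runtime
print(app_layer["/etc/motd"])  # welcome to app

# A fresh container from the same image starts with an empty writable layer.
fresh = ChainMap({}, app_layer, base_layer)
print(fresh["/etc/motd"])  # welcome to app
```

Throwing away container_layer corresponds to removing the container, and copying it into a new read-only layer corresponds to creating a new image from the container's state.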